We watched a GC fix his own playbook

The playbook had a gap. The agent found it.

By Paul Lacey

A playbook gap no one had noticed

A few weeks ago I was watching one of our clients’ GCs (general counsel) review an agent’s output. The agent had reviewed a contract against the team’s playbook and flagged a clause it wasn’t confident about. Alongside the flag, it showed its reasoning, the chain of logic it had followed to reach its assessment.

The GC read the reasoning and paused. The agent’s thinking had exposed the real flaw, and it wasn’t the agent’s. There was an ambiguity in the playbook itself. The playbook’s language on that clause was genuinely unclear about what the team’s preferred position should be in that situation, and the agent’s uncertainty was a faithful reflection of that gap. The GC fixed the output, then fixed the playbook. The problem never came back.

I’ve been thinking about that moment a lot since, because it reframes something fundamental about how supervision works in an agentic model. The GC wasn’t reviewing a contract. He was reviewing the agent’s judgment. And in reviewing the judgment, he found something he wouldn’t have found reviewing the contract alone: a gap in his own team’s institutional knowledge that had probably just been living in people’s brains, possibly inconsistently.

I think that reframing, from reviewing work to reviewing judgment, is the most important design problem in legal AI right now.

The perfection trap

There is a version of the legal AI conversation that goes nowhere productive. It starts with a question about accuracy percentages, moves to edge cases, and ends with the conclusion that agents aren’t ready yet. Come back in eighteen months.

I understand the instinct. Legal work carries real consequences. A missed liability cap, a non-compliant indemnity clause, a data processing agreement that doesn’t match the jurisdiction. These are not abstract risks. They show up in board meetings and regulatory filings. The standard for “good enough” in legal is genuinely high, and it should be.

But I think the perfection frame misses something fundamental about how work actually gets supervised in every other context. No law firm partner reads every word of a junior associate’s contract review from scratch. They check the sections that matter, probe the reasoning on the hard calls, and move on. The junior’s work product isn’t perfect. The partner’s review makes it reliable. The combination of execution and targeted oversight is what produces quality at scale. It always has been.

The question with agents is not whether they produce perfect output. They don’t, and they won’t for a while. The question is whether their output is good enough to supervise efficiently, and whether the supervision experience is designed well enough that a skilled lawyer can catch what matters without re-doing the work from scratch.

That second part, the supervision experience, is where I think the industry has underinvested. Most of the engineering effort in legal AI has gone into making agents smarter. Not enough has gone into making the human oversight layer fast, precise, and genuinely useful.

⚡ What we’re actually building

We have been spending a lot of time on supervision lately. Not supervision as a compliance checkbox or a trust-building exercise during pilots, but supervision as a core product surface that determines whether agentic legal services actually work at enterprise scale.

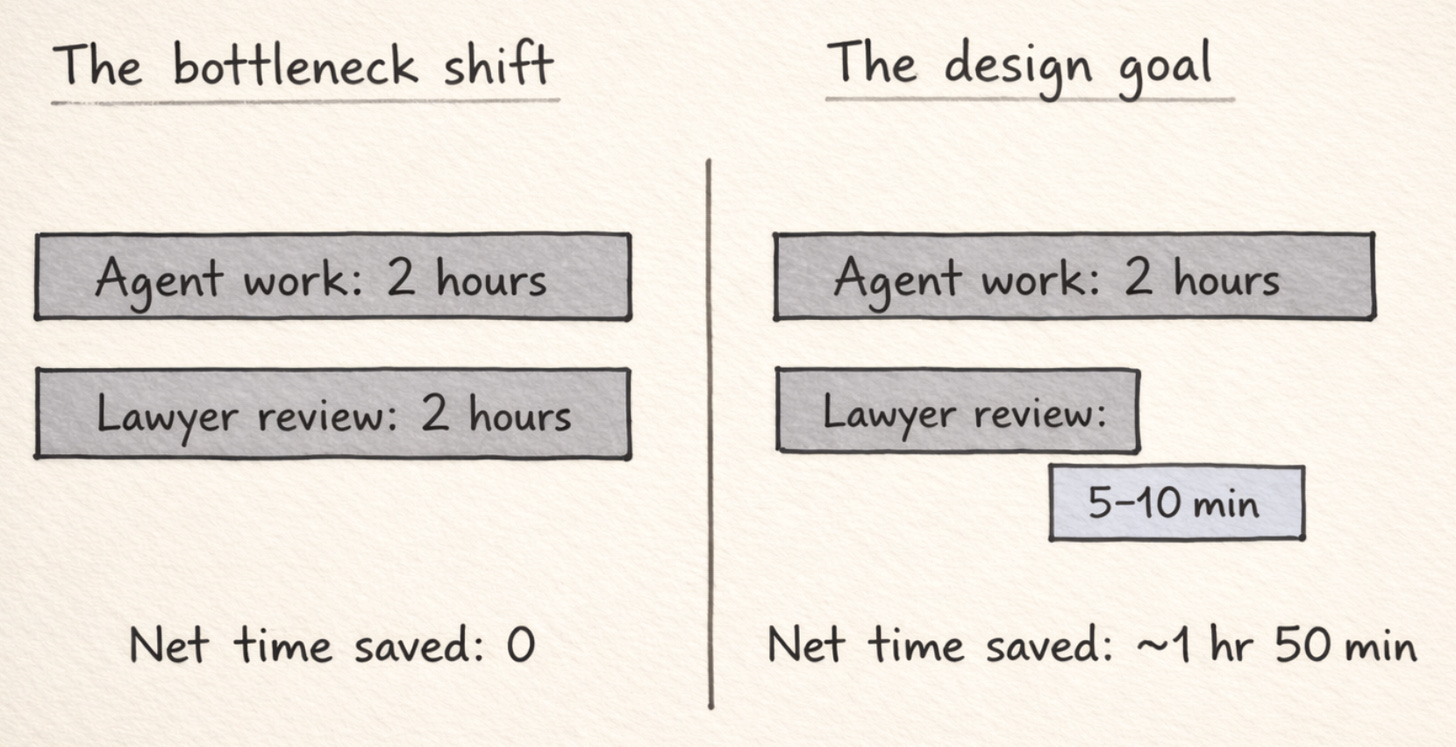

The framing I keep coming back to is this: if an agent does two hours of work and the lawyer spends two hours checking it, you haven’t saved anything. You’ve just moved the bottleneck. The economics only change when the checking takes a fraction of the doing. Five minutes, maybe ten, for work that would have taken a lawyer half a morning.
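The arithmetic behind that framing can be sketched in a few lines. This is an illustrative calculation, not a measurement: the function names are hypothetical, and treating "half a morning" as 120 minutes is an assumption chosen to match the two-hour example above.

```python
def review_ratio(work_minutes: float, review_minutes: float) -> float:
    """Fraction of the original effort that is spent on checking."""
    if work_minutes <= 0:
        raise ValueError("work_minutes must be positive")
    return review_minutes / work_minutes


def lawyer_minutes_saved(work_minutes: float, review_minutes: float) -> float:
    """Lawyer time freed up when the agent does the work and a lawyer reviews it."""
    return work_minutes - review_minutes


# Assumption: "half a morning" is treated as 120 minutes of lawyer work.
WORK_MINUTES = 120

# Two hours of work, two hours of checking: the bottleneck has only moved.
print(review_ratio(WORK_MINUTES, 120), lawyer_minutes_saved(WORK_MINUTES, 120))

# Two hours of work, ten minutes of checking: the economics change.
print(round(review_ratio(WORK_MINUTES, 10), 2), lawyer_minutes_saved(WORK_MINUTES, 10))
```

A ratio close to 1 means nothing has been saved. The target is a ratio well below that.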

That ratio is a design problem, not an intelligence problem. Making the agent smarter helps, obviously. But the bigger lever, I find, is making the supervision interface do the right kind of work for the reviewer.

Here is what I mean concretely. When an agent reviews a contract against a playbook, it makes dozens of individual assessments. Clause by clause, it decides whether the language is compliant, whether it falls within acceptable parameters, or whether it deviates in a way that needs attention. Most of those assessments, for a well-configured agent running on a solid playbook, are correct. The reviewer doesn’t need to examine every one.

What the reviewer needs is to see the ones that matter. The clauses the agent flagged as non-compliant. The places where the agent’s confidence was low, where it effectively says: I did my best here, but a human should look at this. And critically, the reasoning. Not just the answer, but the chain of logic the agent followed to arrive at it.

I keep describing this internally as “the junior associate model.” A good junior doesn’t just hand you a marked-up contract and say “done.” They walk you through it. They say: I accepted these clauses because they match our standard position. I flagged this indemnity cap because it’s below our threshold but the language is unusual, and I wasn’t sure whether the carve-out changes the analysis. I rejected this limitation of liability because it conflicts with our playbook on consequential damages, and here’s the fallback language I’d suggest.

That’s what targeted supervision looks like. The senior lawyer’s attention goes precisely where it’s needed. The junior’s work product provides the foundation. The senior’s expertise provides the quality guarantee. Neither is doing the other’s job.

We are designing our supervision cockpit around exactly this principle. Surface the non-compliant items. Surface the uncertain items. Show the agent’s reasoning at each step, so the reviewer can assess the quality of the thinking rather than re-doing the thinking from scratch. The goal is to make a lawyer’s review feel less like auditing a black box and more like managing a capable team member who happens to work at three in the morning.
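As a rough illustration of that principle, here is a minimal sketch of the triage step. It is a sketch under stated assumptions, not a description of the actual product: the `Assessment` fields, the `triage` function, the 0.8 confidence threshold, and the sample clauses are all hypothetical and made up for the example.

```python
from dataclasses import dataclass

# Hypothetical cut-off: below this, an assessment counts as "uncertain".
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Assessment:
    clause: str        # clause label, e.g. "Indemnity cap"
    compliant: bool    # the agent's verdict against the playbook
    confidence: float  # the agent's self-reported confidence, 0.0 to 1.0
    reasoning: str     # the chain of logic the agent followed


def triage(assessments, threshold=CONFIDENCE_THRESHOLD):
    """Split assessments into a review queue and an accepted list."""
    needs_review, accepted = [], []
    for a in assessments:
        if not a.compliant or a.confidence < threshold:
            needs_review.append(a)
        else:
            accepted.append(a)
    # Non-compliant items first, then the least confident ones.
    needs_review.sort(key=lambda a: (a.compliant, a.confidence))
    return needs_review, accepted


# Sample data invented for the example.
assessments = [
    Assessment("Governing law", True, 0.97, "Matches our standard position."),
    Assessment("Indemnity cap", True, 0.55,
               "Language is unusual; unsure whether the carve-out changes the analysis."),
    Assessment("Limitation of liability", False, 0.92,
               "Conflicts with the playbook on consequential damages."),
]

review, accepted = triage(assessments)
for a in review:
    print(f"{a.clause}: {a.reasoning}")
```

The reviewer sees the flagged and uncertain clauses together with the reasoning behind each one. Everything else stays out of the way unless they choose to look.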

⚖️ Three ways to supervise, one standard to meet

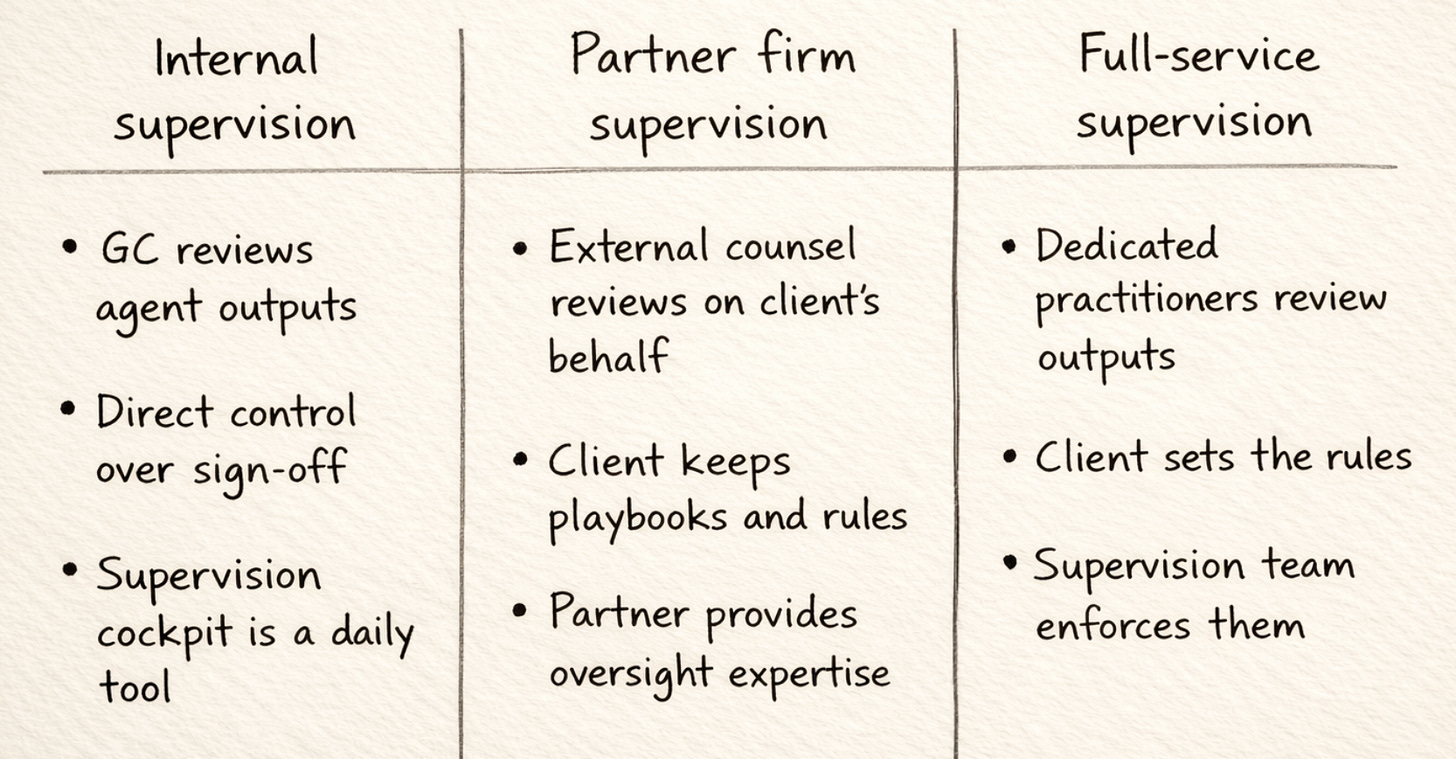

One thing I find interesting about supervision in practice is that different organisations want fundamentally different models for who does the checking.

Some enterprise legal teams want their own lawyers reviewing every agent output, at least initially. The GC wants direct control over what goes out under the legal team’s name. They trust the technology enough to let it do the first pass, but the sign-off stays internal. For these teams, the supervision cockpit is a tool their lawyers use every morning, the same way they’d review a junior’s overnight work.

Other organisations, particularly those without deep bench strength, want a specialist firm handling the oversight. A partner firm runs the supervision layer on the client’s behalf, reviewing agent outputs against the client’s own playbooks and standards. The client gets the economics of automation with the quality assurance of experienced external counsel, without the overhead of a traditional outsourcing arrangement. The institutional knowledge, the playbooks, the escalation rules, those stay with the client. The supervision expertise is provided by the partner.

And some organisations want the supervision handled entirely as part of the service. Experienced legal practitioners, people who understand commercial contracts and the specific nuances of what the agent is doing, review the outputs before they reach the business. The client sets the rules. The supervision team ensures the rules are followed.

All three models need the same thing from the product. A supervision experience that makes it possible to review high volumes of agent work without the review itself becoming the bottleneck. The cockpit design has to work for a GC checking ten items before their first meeting of the day, for a partner firm managing supervision across multiple clients, and for a dedicated supervision team processing hundreds of items a week.

That constraint, building for three different operating models simultaneously, has been one of the more interesting product challenges I’ve worked on. It forces you to think about supervision not as a single workflow but as an information architecture problem. What does every reviewer need to see, regardless of their role? What’s the minimum information required to make a confident approve-or-edit decision? How do you surface risk without creating noise?

🏗️ Why waiting is the riskier bet

I want to address the “not ready yet” objection directly, because I think it gets the risk calculus backwards.

The argument for waiting is intuitive. Agents will get better. Models will improve. Accuracy will increase. If you deploy now, you’re deploying something imperfect. Why not wait until the technology is mature?

The counterargument is less intuitive but, I think, more important. Every month a legal team spends waiting for perfect agents is a month where routine work continues to consume senior lawyer time, the backlog continues to grow, and the organisation builds no muscle memory around what it means to supervise rather than perform.

The supervision skill is not trivial. Learning to review agent output effectively, knowing where to probe and where to trust, calibrating your attention to the risk level of each decision, building playbooks that encode your team’s actual standards rather than the idealised version, all of this takes time and practice. The teams that start now, even with imperfect agents, are building that capability. The teams that wait will have to build it later, under more pressure, with less room to learn.

There is a parallel here with how firms adopted junior associates in the first place. Nobody waited until law schools produced graduates who could handle a full matter independently. You hired juniors, supervised their work, and over time the combination got more efficient. The juniors got better. The supervision got sharper. The firm developed an instinct for what to delegate and what to keep at the senior level.

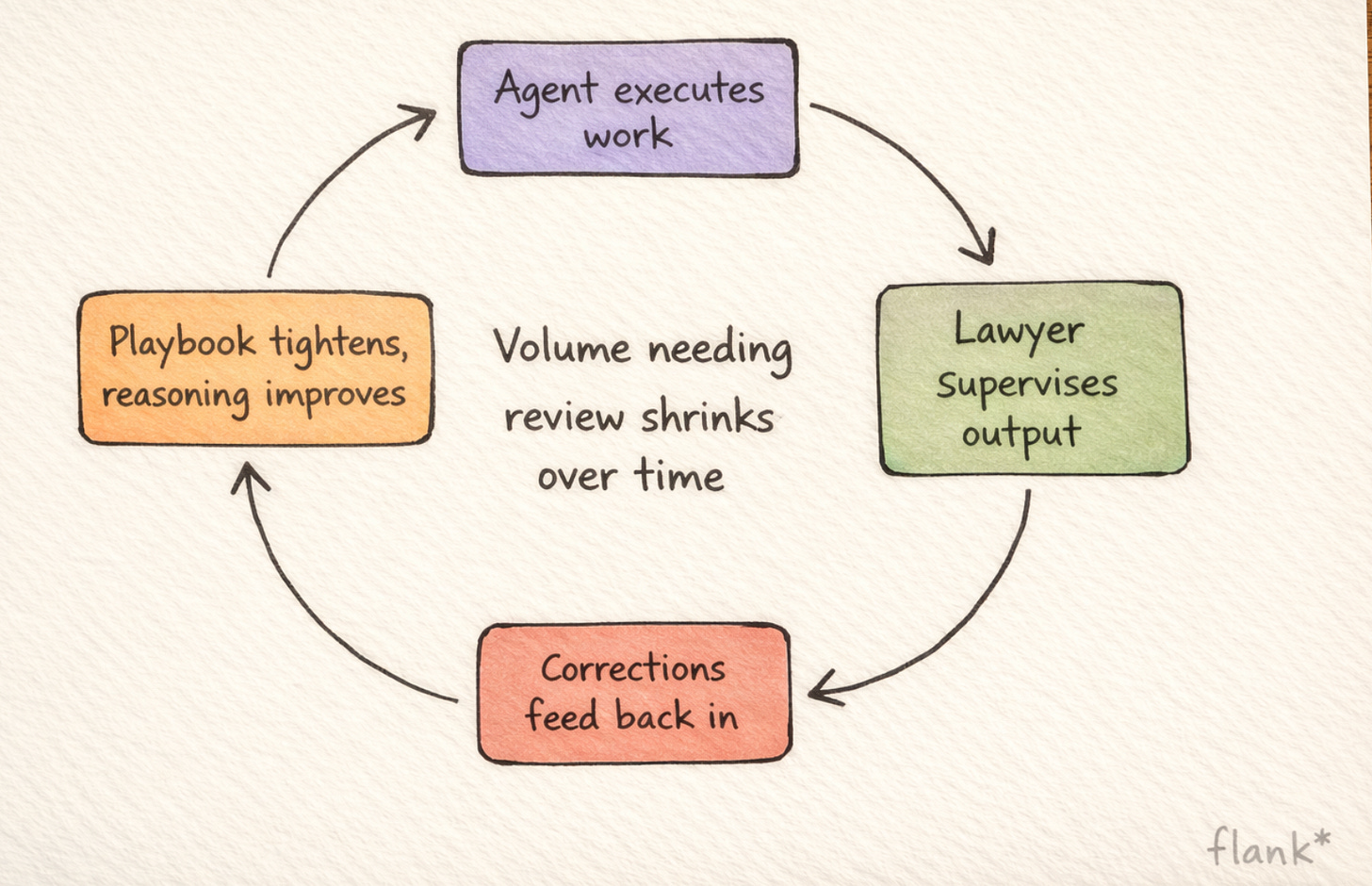

I think the same dynamic applies now. The agents are not yet where they’ll be in two years. But the supervision infrastructure, both the technology and the organisational practice, benefits from being exercised. You learn what your playbooks are missing. You learn which contract types the agent handles reliably and which need closer attention. You learn what good supervision actually looks like for your team, in your context, with your risk tolerance.

And the agent gets better too. Every supervision correction, every edit a lawyer makes to an agent’s output, feeds back into the system. The playbook gets tighter. The agent’s reasoning improves. The volume of items that need attention shrinks over time, not because the agent was designed to be perfect from day one, but because the feedback loop between execution and oversight compounds.

The shift I’m watching

I find that the most interesting conversations I have with legal teams have changed over the past six months. The question used to be: can the agent actually do this? The question now is: how do I make sure it’s doing it right?

That’s a fundamentally different conversation. The first one is about capability. The second is about governance. And the second one is where the real product and organisational design challenges live.

I don’t think supervision is a transitional feature, something you need while the agents are immature that you’ll eventually switch off. I think it’s a permanent part of how legal work gets done in an agentic model. The scope of what gets supervised will narrow over time. The trivial stuff will run autonomously. But the principle, that a skilled human reviews the high-stakes, high-uncertainty, high-consequence decisions, is not going anywhere. The lawyer who spent seven minutes reviewing that supplier agreement wasn’t performing a stopgap function. She was doing her actual job, the version of it that makes the best use of her twelve years of experience.

The design question for us is how to make that seven minutes as information-rich and efficient as possible. The answer is not to hide the complexity. The answer is to organise it so the reviewer’s attention goes exactly where their expertise matters most.

We’re not there yet on every dimension. There are pieces of this we’re still designing and building, and I’d rather be honest about that than pretend the finished product already exists. But the direction is clear, and the early results from teams already using supervised agents have reinforced what I suspected: the constraint on deploying legal agents at scale was never just agent intelligence. It was always, at least equally, about whether the oversight layer was good enough to make imperfect agents genuinely useful.

I think it is. And I think it’s getting better faster than most people expect.
