Claude reviewed the contract. Legal didn't.

AI knows contract law. It doesn't know your company, by Lorna Khemraz

We have also started a Substack. Subscribe to us there to receive more insights.

Fluent, confident, and incomplete

A procurement manager at a manufacturing company needs to clear a supplier agreement before end of quarter. Legal is swamped. A colleague says: just ask Claude, it handles this stuff all the time. She pastes in the indemnity clause and types: “is this acceptable?”

Claude says it looks like a mutual cap at two times contract value, that this is broadly market standard, and that there’s nothing here that should prevent signature.

She signs.

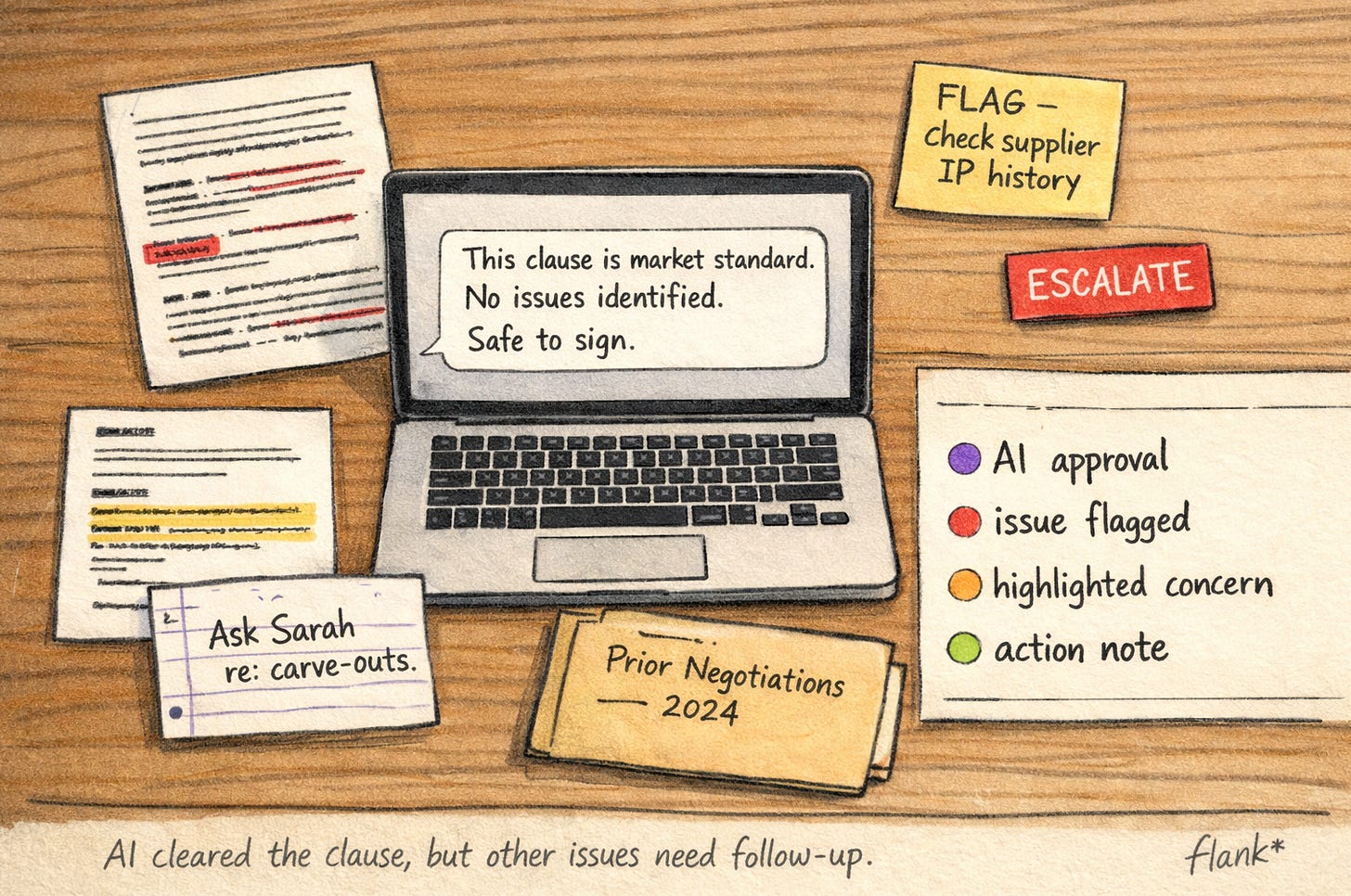

Six months later there is a claim. The clause has a carve-out for IP-related consequential damages that sits in a subordinate sentence and that, as it turns out, her company’s legal team has flagged as problematic in a prior agreement with this supplier’s parent entity. It was not in any written policy. It was in a note from the previous negotiation, referenced in an annotation in a redlined Word document that never made it into a formal playbook, and held primarily in the institutional memory of one mid-level lawyer who had been on that deal two years ago.

Claude answered the question she asked. The question she should have asked was different, and she had no way to know that.

The question she should have asked was different

I find this failure mode worth thinking about carefully, because it is structurally different from the other ways legal AI can go wrong. The accuracy objection, the concern that AI will get the law wrong or misread a clause, is real but increasingly well-understood. Models are getting better. Legal-specific fine-tuning is improving. The “what if it makes a mistake” question has become a manageable risk with the right supervision architecture.

The problem I am describing is subtler, and I think harder to fix with model improvements alone. It is not that the AI got the answer wrong. It is that the AI answered a version of the question that was narrower than the question that actually needed answering, and neither the user nor the AI had any mechanism to notice the gap.

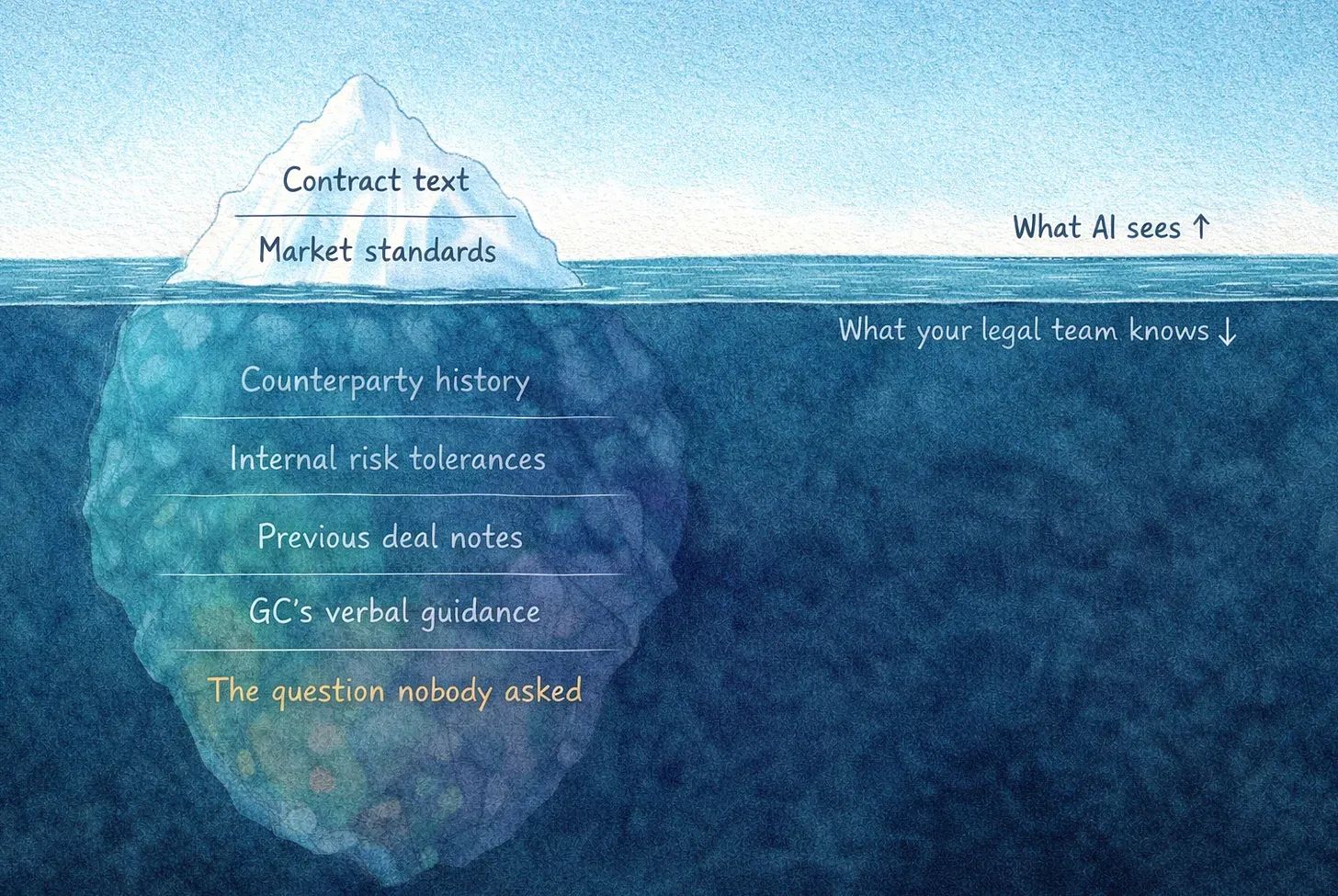

Horizontal AI, whether that is Claude, Copilot, or Gemini, is extraordinarily capable at the question in front of it. It knows contract law. It knows market standards. It can identify problematic clauses, compare language against benchmarks, and explain what a given provision means in plain terms. What it does not know is anything that is specific to your organisation’s history with this counterparty, the deals that went sideways and why, the specific risk tolerances your board settled on after the last significant claim, the internal guidance your GC issued that never made it to a formal document, or the question your legal team would have thought to ask before anyone else did.

This is not a gap that scales away. It is structural.

It is not that the AI got the answer wrong. It is that the AI answered a version of the question that was narrower than the question that actually needed answering.

What your legal team actually does

Legal work inside an organisation is not primarily about knowing the law. Most of the law that governs routine contracting is stable, well-documented, and something a general model can handle competently. The harder layer is institutional knowledge: understanding which counterparties carry specific risk histories, which contract types are sensitive beyond what the language suggests, which business units have patterns of bringing things to legal too late, and which escalation paths matter for this specific agreement. That knowledge is not in any training dataset. It lives in the legal team.

A general-purpose AI model has no access to that layer. It cannot have access to it, because the layer is proprietary, unstructured, and in significant part undocumented. It answers based on what it knows about the law and what it can infer from the text in front of it. That is useful, but it is not the same thing as what your legal team does when they review the same clause. Your legal team is not just checking the language against general standards. They are checking it against everything they know about your business, your counterparties, your risk history, and the questions that have gotten people into trouble before. The clause gets filtered through all of that before anyone decides it is acceptable.

The answer comes back fluent, confident, and plausible. Nothing in the output signals that it is incomplete.

When a business user bypasses legal and goes directly to a general model, they are not accessing a faster version of that judgment. They are accessing a different and fundamentally less informed version of it, one that lacks the context that makes legal review valuable in the first place. The answer comes back fluent, confident, and plausible. Nothing in the output signals that it is incomplete. That is, I think, the specific risk: not that the AI is wrong in a detectable way, but that it is wrong in a way that looks right.

📄 This is not about protecting turf

There is a version of this argument that sounds like a defence of legal turf, and I want to be precise about what I am and am not claiming.

I am not claiming that business users should never use AI to get a quick read on a contract. For genuinely low-stakes, low-context questions, a good general model is probably fine and almost certainly better than no review at all. If someone wants to understand what a standard arbitration clause means before a meeting, Claude will explain it accurately.

The appropriate threshold for "this is low-stakes enough to skip the legal team" is much narrower than most organisations currently treat it.

What I am claiming is that the appropriate threshold for “this is low-stakes enough to skip the legal team” is much narrower than most organisations currently treat it. The problem with routing around legal via a general model is not just that the model might be wrong on the law. It is that the model cannot know which questions to ask that the business user did not think to ask, cannot flag the organisational red lines that are nowhere in the document being reviewed, and cannot catch the thing that is technically acceptable by general standards but problematic for this specific company given its specific history and risk appetite. That is not a gap in model capability. It is a gap in available context, and it cannot be bridged by a more capable model.

⚡ The missing category

The Fortune magazine framing that has been circulating in legal tech circles this month describes the market as splitting between “authoritative AI” (grounded in proprietary legal data, deep research corpora, precedent) and “operational AI” (executing workflows, handling volume work). It is a useful lens. But I think it misses a third category that is more relevant to in-house teams: contextual AI. AI that is not just authoritative about the law but about this specific organisation’s interpretation of the law for these specific contract types with these specific counterparties and these specific risk tolerances.

That is what a legal team provides. When they build an AI system around their own playbooks, preferred positions, escalation rules, and institutional red lines, they are not just making a general model more legally sophisticated. They are encoding the context that transforms “is this clause acceptable under English law” into “is this clause acceptable for us, given everything we know.” The output of those two questions is sometimes the same. When it isn’t, the difference tends to be the thing that matters most.

No amount of legal sophistication in a general model compensates for the absence of organisational context.

This is what I find most underappreciated about the vertical versus horizontal distinction in legal AI. The argument for vertical is often made as a capability argument: specialised models are more accurate on legal tasks. That is probably true, but it is not the core point. The core point is that no amount of legal sophistication in a general model compensates for the absence of organisational context. You can have the most legally capable AI in the world and still get the wrong answer if the AI does not know what your legal team knows about why this particular clause, in this particular agreement, with this particular supplier, warrants more scrutiny than it appears to need.

🔒 Silent failures don't trigger governance

What makes this genuinely difficult to manage is that the risk is invisible until it isn’t. The procurement manager in my opening scenario had no feedback that told her the AI had answered a different question than the one that needed answering. The output was complete, professional, and internally consistent. There was nothing in it that flagged the gap between what Claude knew and what her legal team would have known. The failure was silent, and silent failures are the hardest kind to build governance around.

I think this is the honest case for keeping the legal team in the loop on anything beyond genuinely trivial requests, not because the AI cannot read a contract, but because the AI cannot know what the contract should be read against. The legal team is the keeper of that context. The question of whether a clause is acceptable is inseparable from the context in which it is being asked, and the context lives with the people who know the organisation.

Silent failures are the hardest kind to build governance around.

The obvious objection is that this creates exactly the bottleneck that AI is supposed to solve. If everything has to go through legal, the queue doesn’t move. I think that is the right objection, and it points toward the right solution: legal teams building AI systems that encode their own context, their playbooks, their positions, their red lines, rather than business users routing around legal to access a general model. The goal is not to make legal the bottleneck again. It is to give the business access to legal judgment that has been operationalised, deployed into an agent system that knows what to look for and what to flag, without every query requiring a lawyer to be in the loop on the execution.

The difference between that and asking Claude is not speed. Both are fast. The difference is that one of them knows the buried questions. The other answers the question you asked.

The adoption number that hides the risk

I do not know how many organisations are currently in the procurement manager scenario, using general models to get quick answers to legal questions and treating those answers as adequate review. I suspect the number is higher than legal teams realise, because the usage tends to be informal and untracked. What I do think is that the risk profile of that practice is systematically underestimated, not because the AI answers are unreliable in a detectable way, but precisely because they are not. The answer sounds right. It might be right. It is missing a layer of context that would have changed it if that context had been available, and there is no signal in the output that tells you when you are in that situation.

The ACC/Everlaw data showing 52% AI adoption in in-house legal against only 7% seeing a reduction in total cost has, I think, two readings. The optimistic reading, which I wrote about in an earlier piece, is that most tools are not yet agentic enough to move the needle on throughput. That is true. The second reading is that a significant portion of the AI “usage” being counted is exactly this: business users doing informal legal review via general chat models, generating outputs that feel like reviewed documents but carry risks that nobody has mapped. That usage shows up in the adoption numbers. It does not show up in the risk register. Not until something goes wrong.

✳️