The Throughput Problem

The throughput problem nobody is measuring

Legal teams adopted AI faster than anyone predicted. According to the ACC/Everlaw survey, corporate legal AI adoption more than doubled in a single year, from 23% to 52%. Nearly two thirds (64%) of in-house teams now expect to reduce their reliance on outside counsel because of the tools they are building internally.

And yet, when you look at what has actually changed, the number is startling. Only 7% report a reduction in total matter cost.

I find this gap worth sitting with, because I think it reveals something structural about the way most legal AI has been deployed so far, something that matters more than any individual vendor's feature list.

What the 7% tells us

The tools work. The Harvard/BCG study showed that copilots deliver roughly 25% speed gains and 40% quality improvements on the tasks they assist with. Those are real numbers. A lawyer using a copilot to review an NDA is genuinely faster than one working without it.

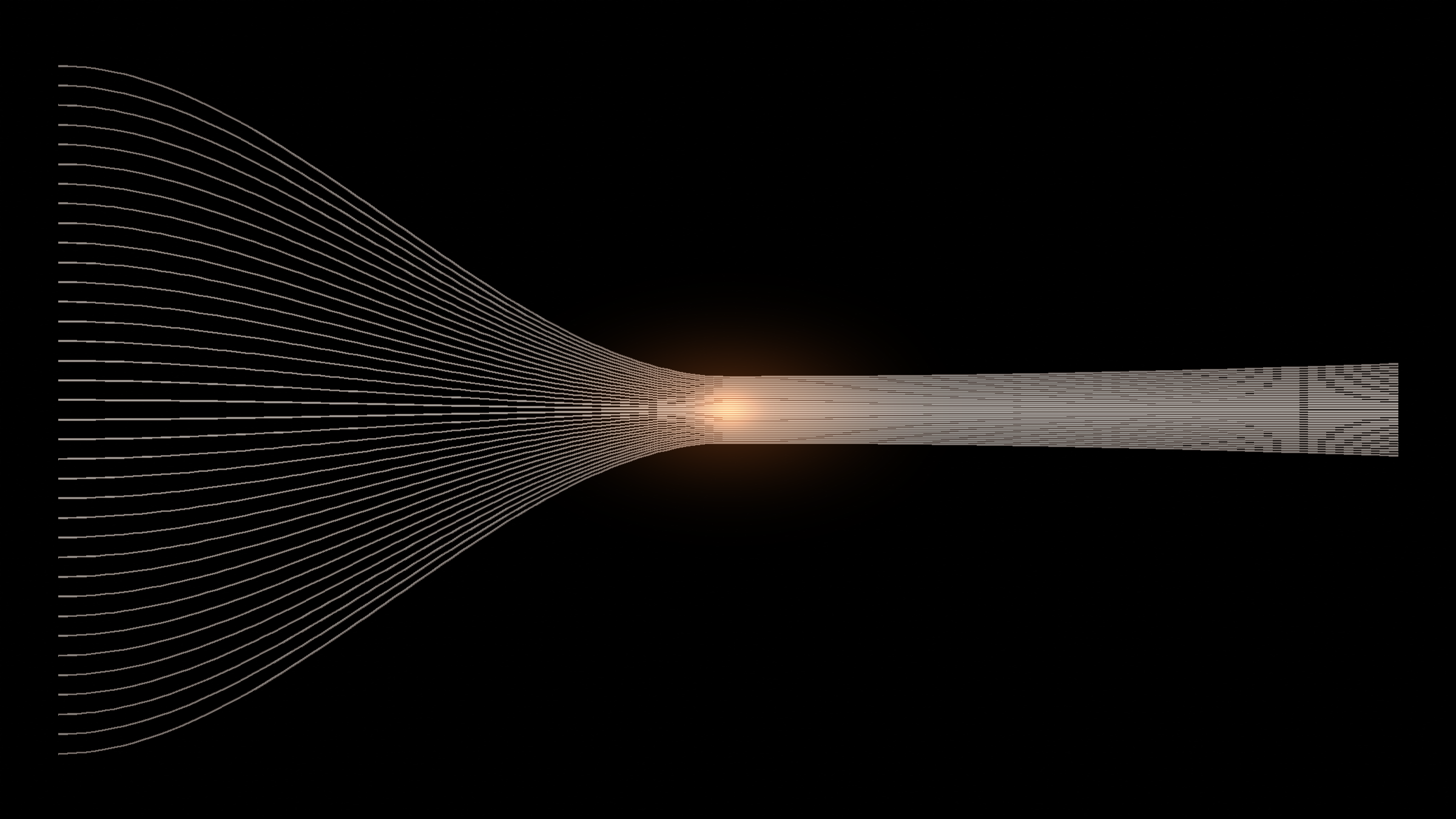

But the throughput of the team has not changed. Every contract still needs a lawyer to open the tool, paste in the document, review the output, make a judgment call, and send the response. The bottleneck has been given a better interface. It has not been moved.

Think about what this looks like concretely. A sales rep in Singapore needs an NDA at 2am local time. The legal team is asleep. There is no copilot in the world that picks up that email, drafts the NDA against the company's template, applies the right jurisdiction-specific clauses, and sends it back. Every copilot requires a human to initiate the work. The email sits in the queue until morning. The deal waits.

That wait, multiplied across thousands of requests per year, is the gap between 64% and 7%.

Why faster lawyers don't fix the queue

There is a version of this argument that sounds like a criticism of the tools themselves, but that misreads the issue. The tools do what they are designed to do: they make individual lawyers more productive on individual tasks.

The problem is that the constraint was never individual task speed. The constraint is how many requests the team can process concurrently, which is bounded by how many lawyers are available to pick work up off the pile. Making each lawyer 25% faster on a given task is an incremental gain. It does not change the staffing model. It does not make legal capacity elastic. It does not mean the business can get an answer at 2am.
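The queue argument can be made concrete with a toy simulation. This is a minimal sketch under invented assumptions (two lawyers, 9-to-5 office hours, a one-hour task, one request every two hours around the clock, no weekends); none of these figures come from the surveys cited here, and the function names are my own. It treats "25% faster" as the task taking 1/1.25 of the original time.

```python
import heapq

# All numbers below are illustrative assumptions, not data from any survey.
OFFICE_OPEN, OFFICE_CLOSE = 9, 17  # assumed staffed hours, local time


def next_staffed_time(t: float) -> float:
    """Earliest time >= t (hours since midnight on day 0) when lawyers are at work."""
    day, hour = divmod(t, 24)
    if hour < OFFICE_OPEN:
        return day * 24 + OFFICE_OPEN
    if hour >= OFFICE_CLOSE:
        return (day + 1) * 24 + OFFICE_OPEN
    return t


def mean_turnaround(arrivals, task_hours, workers, always_on=False):
    """Mean hours from request arrival to response, first come, first served.

    Simplification: a task started before closing time runs to completion.
    """
    free_at = [0.0] * workers  # min-heap of times at which each worker is next free
    total = 0.0
    for arrival in sorted(arrivals):
        start = max(arrival, heapq.heappop(free_at))
        if not always_on:
            start = next_staffed_time(start)  # work waits for office hours
        finish = start + task_hours
        heapq.heappush(free_at, finish)
        total += finish - arrival
    return total / len(arrivals)


# One request every two hours, around the clock, for five days.
arrivals = list(range(0, 120, 2))

baseline = mean_turnaround(arrivals, task_hours=1.0, workers=2)
copilot = mean_turnaround(arrivals, task_hours=1.0 / 1.25, workers=2)  # 25% faster per task
agents = mean_turnaround(arrivals, task_hours=1.0, workers=2, always_on=True)

print(f"lawyers, no copilot:  {baseline:.1f}h mean turnaround")
print(f"lawyers with copilot: {copilot:.1f}h mean turnaround")
print(f"always-on agents:     {agents:.1f}h mean turnaround")
```

In this toy setup the copilot trims only a small fraction of the mean turnaround, because most of the wait is the overnight queue rather than the task itself. The always-on case responds in the time the task takes, even though each task is no faster than the baseline.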

I think this is why Forrester's prediction that 25% of planned AI spend will be deferred into 2027 makes sense. Buyers invested in copilots expecting throughput gains, and what they got was per-task speed gains. The ROI case for "my lawyers work slightly faster" is harder to quantify and harder to defend in a budget review than "we handled 40% more requests with the same team."

The word everyone is using

Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by the end of this year, up from less than 5% in 2025. Every legal tech vendor now describes their product as "agentic." The term appears in pitch decks, landing pages, analyst reports. It has become, in the way of enterprise software marketing, almost meaningless.

I think there is a useful distinction buried underneath the marketing, though. It comes down to a simple test: after the AI does its thing, does a lawyer still need to do the work?

If the lawyer opens a Word plugin, pastes in a contract, gets suggestions, and then manually applies them, that is an assistant. A good one, potentially. But an assistant. The lawyer is the operator.

If the lawyer defines the rules, the templates, the fallback positions, and the escalation thresholds, and then work arrives in their inbox already done, reviewed against those rules, with exceptions flagged for their attention, that is something categorically different. The lawyer is a supervisor, not an operator. The distinction is not semantic. It changes what "capacity" means.

What changes when work disappears from the queue

McKinsey now counts 20,000 AI agents as part of its 60,000-strong workforce, with a goal of reaching parity by the end of 2026. This is not a productivity improvement. McKinsey deployed those agents not to make existing consultants faster at their current tasks, but to do work that would otherwise require hiring 20,000 more people.

The parallel in legal is direct. A legal team that deploys agents to handle routine contracting, triage, and Q&A does not just work faster. It works on different things. The backlog of NDAs and vendor agreements stops consuming lawyer hours entirely, not because the lawyers became quicker at processing them, but because the work never reaches their desk in the first place.

The business user's experience changes too, and I think this matters more than most people acknowledge. They send an email. They get a response in minutes. They do not log into a portal, fill out a form, or track a ticket. They do not know or care whether the response came from a human or an agent. They just stopped waiting.

The supervision question

The reasonable objection here is trust. If agents are doing substantive legal work, who is checking the output? This is the right question, and I think the answer determines whether agentic legal AI is a real shift or just a rebranding of the same copilot approach with different marketing.

The model that I find compelling is one where supervision is designed into the product rather than bolted on afterwards. The agent picks up a request, does the work, and routes the completed output to a supervision queue. A lawyer reviews the exceptions, the flagged items, the cases where the agent's confidence was low. Everything else was handled, sent, and logged.
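To show the shape of that routing, here is a minimal sketch. Every name in it (`AgentResult`, `SupervisedRouter`, the `confidence` score, the 0.9 threshold) is hypothetical and chosen for illustration; it is not any vendor's API, and a real system would need far more than a single threshold.

```python
from dataclasses import dataclass, field


@dataclass
class AgentResult:
    """Completed work from an agent. All field names are hypothetical."""
    request_id: str
    draft: str
    confidence: float  # assumed 0..1 score reported by the agent
    flags: list[str] = field(default_factory=list)  # playbook rules the draft tripped


@dataclass
class SupervisedRouter:
    min_confidence: float = 0.9  # assumed escalation threshold chosen by the lawyer
    sent: list[str] = field(default_factory=list)
    supervision_queue: list[AgentResult] = field(default_factory=list)
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def route(self, result: AgentResult) -> str:
        """Send routine work directly; hold exceptions for a lawyer. Log both."""
        needs_lawyer = bool(result.flags) or result.confidence < self.min_confidence
        if needs_lawyer:
            self.supervision_queue.append(result)
            outcome = "escalated"
        else:
            self.sent.append(result.request_id)
            outcome = "sent"
        self.audit_log.append((result.request_id, outcome))
        return outcome


router = SupervisedRouter()
router.route(AgentResult("nda-101", "standard mutual NDA", confidence=0.97))
router.route(AgentResult("nda-102", "NDA with edits", confidence=0.95,
                         flags=["non-standard governing law"]))
router.route(AgentResult("msa-7", "vendor agreement", confidence=0.62))

print(router.sent)                                       # handled without a lawyer
print([r.request_id for r in router.supervision_queue])  # waiting for review
```

The design choice that matters is that the lawyer sets the threshold and the flag rules up front, and every outcome lands in the log whether or not a human touched it.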

This is not "set it and forget it." It is closer to how a senior lawyer manages a team of paralegals. You do not do the paralegal's work. You check it, correct it when needed, and focus your own time on the problems that require your judgment. The difference is that agents do not take holidays, do not have a maximum concurrent caseload, and do not need three months to onboard.

Where this is heading

I do not think anyone can predict with confidence how fast this transition will play out. Mustafa Suleyman suggested earlier this year that most legal tasks would be fully automated within 12 to 18 months. Dario Amodei tracks Claude usage at 60% augmentation and 40% automation, with the automation share growing. The Georgetown/Thomson Reuters State of the Legal Market report shows the law firm demand forecast declining for the first time, even as profits remain at record highs, because AI is compressing billable hours while 90% of revenue is still time-based.

These are contradictory pressures. The economics of legal services are shifting underneath the current model, but the institutional structures (billing models, career paths, procurement processes) are built for the old one.

What I think is clearer is the direction. Legal teams that figure out how to deploy agents on routine work, genuine agents that execute rather than assist, and pair them with a supervision model that their lawyers actually trust, will have a structural advantage. Not a speed advantage. A capacity advantage. They will be able to absorb more work without proportional headcount growth, respond to the business outside office hours, and redirect their lawyers toward the strategic, novel, high-stakes work that actually requires human judgment.

The 64% who expect this outcome are right about the destination. The 7% who have achieved it suggest that getting there requires something different from the tools most teams have deployed so far.

And wow, I know this sounds derivative of the "faster horses" mantra spouted by marketers ad nauseam with each new technological revolution, but the analogy holds too well not to write about.