"Faster NDA review" is the wrong job

Why most legal AI pilots solve an activity, not a need, by Taariq Ismail

A prospect mentioned something last week over coffee: “I’ve always told myself that we need faster contract review, but I don’t think that’s actually what I need. I just don’t know how else to describe it.”

She runs legal ops and her team handles around 800 contracts a year, maybe a third of which are NDAs. They’d already tried a copilot tool. It worked. Her lawyers were faster. But nothing had changed in any way that mattered to the business. Turnaround times were marginally better, the same lawyers were still doing the same work, and when the CFO asked what the investment had delivered, she didn’t have an answer that landed.

What she was circling around, I think, is something Clayton Christensen would have recognised immediately. Christensen had this framework called Jobs to Be Done. The core idea is that people don’t buy products. They hire them to make progress in a specific situation. His famous example is McDonald’s milkshakes: morning commuters weren’t buying milkshakes because they wanted a milkshake. They were hiring the milkshake to make a boring commute less boring and keep them full until lunch. The competition wasn’t other milkshakes. It was bananas, bagels, and boredom.

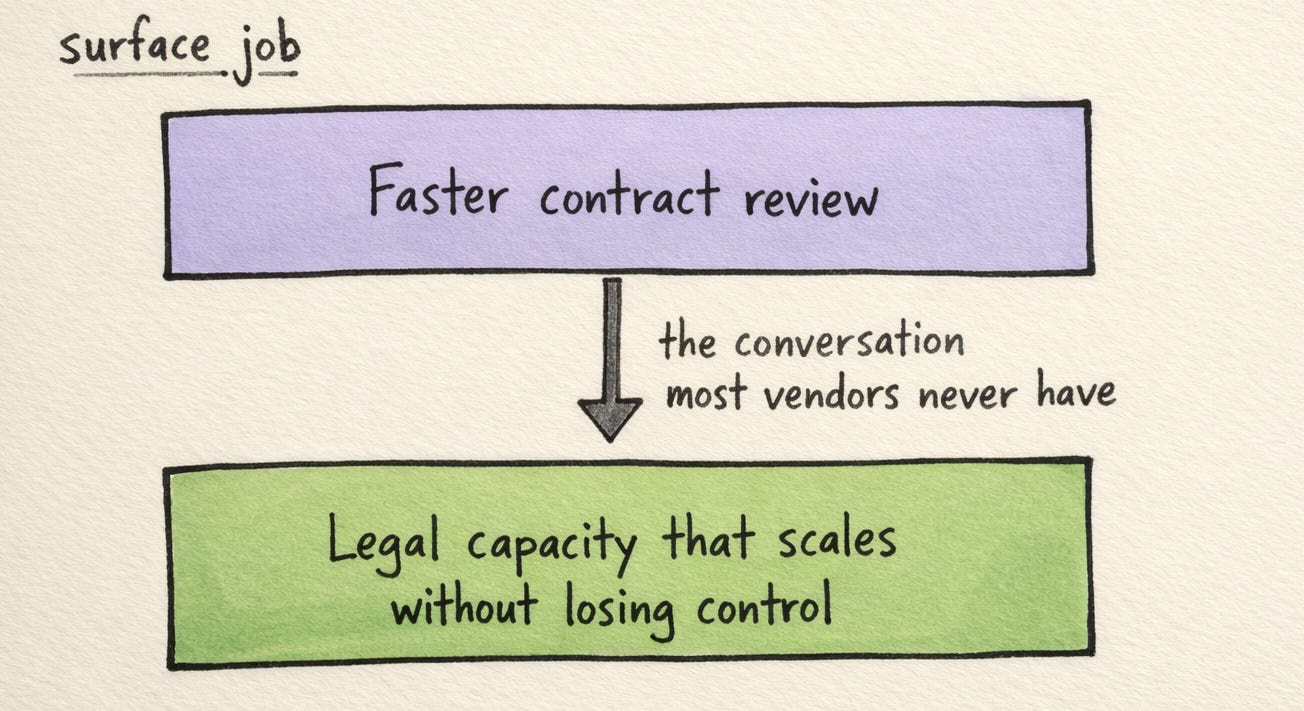

When my prospect said “faster contract review,” she was describing an activity, not a job. The job, once she talked her way into it, sounded more like this: the business sees us as the department that slows things down, I can’t get headcount but the work keeps growing, my best people are burning out on contracts that don’t need their expertise, and I need legal capacity that scales with the business without losing control of quality.

That is a structurally different problem. And it is not one that a faster review tool can solve.

⚡ Why buyers describe the wrong job

I’ve been in enough of these conversations now to notice a pattern. Buyers almost always describe their problem in terms of the activities they currently perform. If you’ve spent ten years reviewing NDAs, the problem feels like “NDA review is slow.” The activity is the frame. It takes real conversational work to step outside it and ask what progress the person is actually trying to make.

Vendors make it worse, honestly. “Our tool reviews NDAs 40% faster” is a clean, measurable claim. “We change how your legal department operates” is ambitious, harder to prove in a 30-minute demo, and harder to scope into a pilot. So the market settles into a loop: buyers describe surface-level jobs, vendors sell surface-level solutions, and both sides wonder why adoption stalls after the initial excitement.

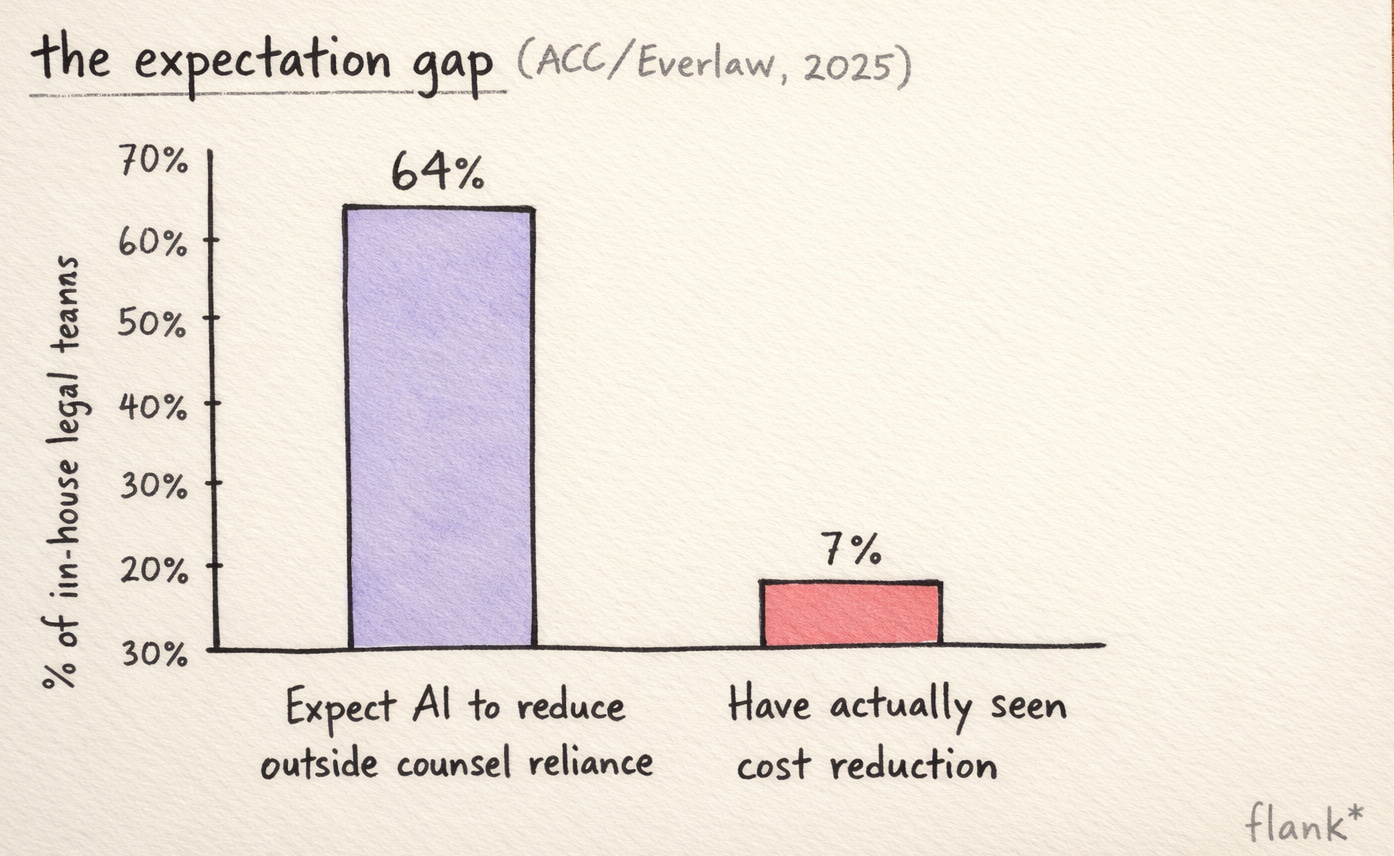

The ACC/Everlaw data makes this visible: 64% of in-house teams expect AI to reduce their reliance on outside counsel, but only 7% have actually seen a reduction in total cost. I think that gap is, in large part, a job-definition problem. The expectation maps to the real job: “change how we absorb work.” The result maps to the surface job: “make our lawyers slightly faster at what they already do.”

What happens when you get the job right

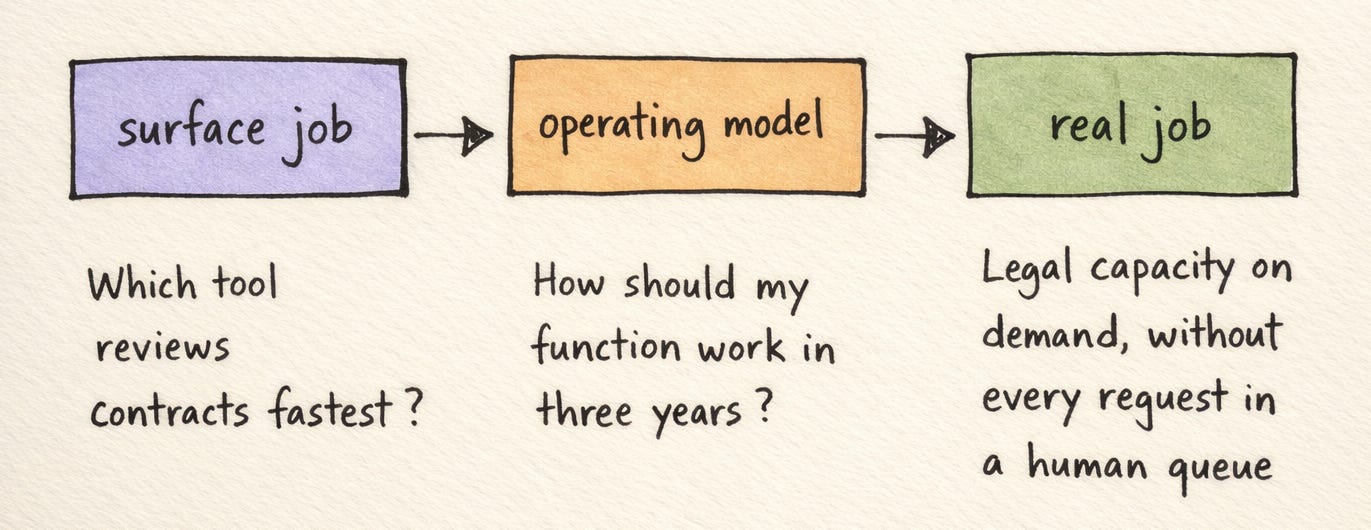

I wrote in my last post about a prospect who used the phrase “engine room” to describe her vision: agents handling the 80% that’s high-volume and low-judgement, lawyers using precision tools for the complex work that falls out. What struck me at the time was that she wasn’t asking “which tool should I buy?” She was asking “how should my function operate in three years?”

That’s the difference between a surface-level job and the real one. The tool question is downstream of the operating model question. And the operating model question is downstream of the job: make legal a service the business can access on demand, without every request sitting in a queue for a human.

When buyers frame the problem at that level, the evaluation criteria shift. The question moves from “which tool reviews contracts fastest?” to “which approach changes the delivery model?” A copilot that makes lawyers 25% faster doesn’t change the delivery model. The business still waits for a lawyer to open the tool, do the work, and respond. The queue gets shorter, marginally. The constraint hasn’t moved.

An agent that picks up the email, does the work, and puts it in a supervision queue for a lawyer to check in the morning changes the delivery model. A request sent at 11pm gets a response before anyone on the legal team starts their day. The constraint has shifted from “how fast can a lawyer work” to “how well have we configured the rules.” Those are different problems with different scaling properties.

🔍 The uncomfortable part of being an advisor

Christensen argued that companies fail at innovation not because they’re stupid but because they listen to their customers too literally. The customer describes what they want in terms of the product they already use. The company builds a better version of that thing. Meanwhile, someone else builds for the actual job and changes the category.

I find this maps directly onto how I think about my role in conversations with legal teams. The easiest thing to do, and I’ve done it plenty, is to hear “we need faster NDA review” and respond with a stat about how fast our agent can review an NDA. The buyer nods. The feature works. The pilot goes ahead.

But those pilots often stall. The surface-level job doesn’t generate the kind of organisational commitment that a real deployment requires. A faster NDA review tool is nice to have. It sits in someone’s innovation budget. Changing how the legal department absorbs work is a strategic decision that gets executive attention and real budget.

The harder thing is to push past the initial description and help the buyer articulate the real job. Sometimes that means asking questions that feel premature. “If we could handle your routine NDAs without a lawyer touching them, what would your team do with that time?” “What’s the actual cost of the backlog, not in lawyer hours, but in deals that closed late?” “When the CFO asks what legal AI has saved you, what number do you want to give?”

These don’t always land well in a first meeting. They can feel presumptuous. The phrasing matters enormously. But the conversations that produce the best outcomes, for the buyer, not just for us, are the ones where we spend the first half hour not talking about the product at all. We talk about what the operating model should look like, what the business actually needs from legal, and where things are falling short. The product conversation follows. And by then, the buyer has a much clearer picture of what they’re hiring the product to do.

Why the stakes are higher now

The argument above could apply to any enterprise software sale. What makes it more urgent with agentic systems is that agents change the nature of the job itself.

With a copilot, the operating model stays the same. The GC still needs lawyers to do the work. The AI makes them faster. When you misidentify the job with a copilot, the downside is modest: you buy a useful tool that doesn’t really transform anything.

With agents, the job the legal team performs shifts underneath them. Work that used to require a lawyer’s active involvement no longer does. The lawyer’s role changes from operator to supervisor. What gets measured changes. How the team relates to the rest of the business changes, because the business stops waiting for a human to pick up their request.

I’ve noticed this in demo conversations over the past few months. The question from prospects has shifted from “Can it actually do this?” to “What can it do?” That’s a meaningful change. The market has moved past scepticism about whether the technology works and into harder territory: understanding what it means for their team if it does work.

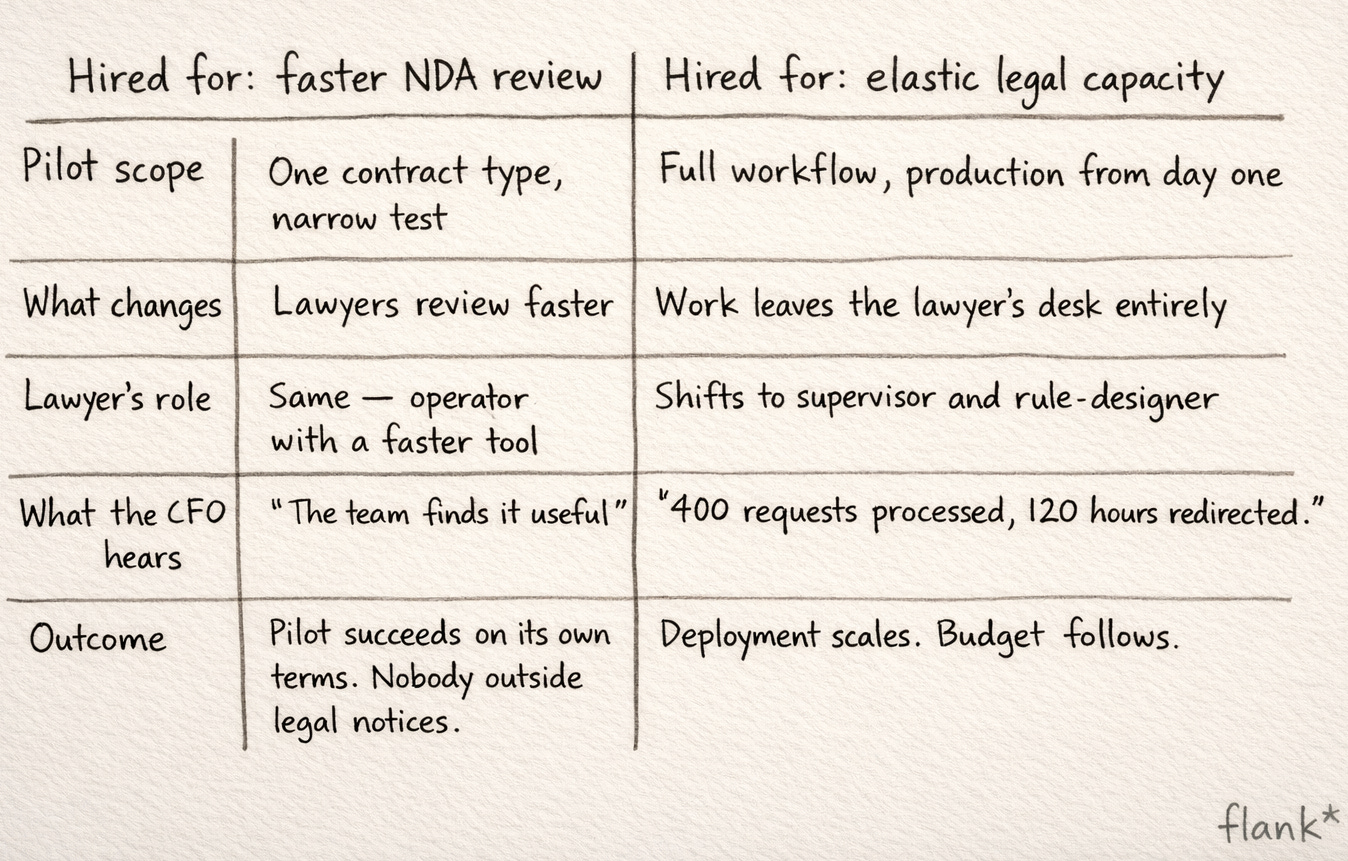

A GC who hires an agent for “faster NDA review” will scope a narrow pilot, see an improvement, maybe expand to a second contract type. But they won’t redesign how their team operates, because the job they defined didn’t call for it. The pilot succeeds on its own terms and fails to produce any result anyone outside the legal team notices.

A GC who hires an agent to make legal capacity elastic, to absorb growing work without proportional headcount, will scope the deployment differently from day one. They’ll think about which work leaves the lawyer’s desk entirely, which gets supervised, and which requires human judgement throughout. They’ll think about what they can tell the CFO in six months. They’ll measure things the business actually cares about: requests handled, turnaround time, hours redirected.

What I haven’t resolved

I want to be honest about a tension in what I’m describing.

The Jobs to Be Done framework says: understand the real job, help the buyer articulate it, sell against the deeper need. In practice, the real job is large and intimidating. “Change your operating model” is a bigger ask than “try this tool on your NDAs.” There’s a genuine risk that pushing buyers toward the real job too early makes the decision feel too big, and they defer.

So I find myself navigating a balance. Help teams see the real job, because the deployment will fail if it’s scoped against the wrong one. But start with a concrete entry point (one workflow, one contract type, 90 days), because that’s how you build evidence and confidence. The trusted advisor’s job, I think, is holding both at once: a narrow starting point that serves the broader job, and a pilot scoped for a measurable result, designed with the awareness that the result is a stepping stone toward a different operating model.

The best conversations I’ve had with legal teams are the ones where we arrive at that framing together. They see the end state. They see that you get there one workflow at a time, each deployment proving the model and funding the next.

In this market, six months spent solving the wrong problem is a cost that compounds in ways that are hard to recover from. The window for getting the job right is narrower than it looks. And the penalty for getting it wrong is arriving at the other side of a market transition having built capability against a job the market has already moved past.

The legal team I was working with didn’t just need faster NDA review; they needed a completely different operational setup. The advisor’s job, if you take it seriously, is to help teams see that difference before the pilot starts, not after it stalls.

✳️